Q3'24: Technology Update – Low Precision and Model Optimization

Authors

Alexander Kozlov, Nikita Savelyev, Vui Seng Chua, Souvikk Kundu, Nikolay Lyalyushkin, Andrey Anufriev, Pablo Munoz, Alexander Suslov, Liubov Talamanova, Yury Gorbachev, Nilesh Jain, Maxim Proshin

Summary

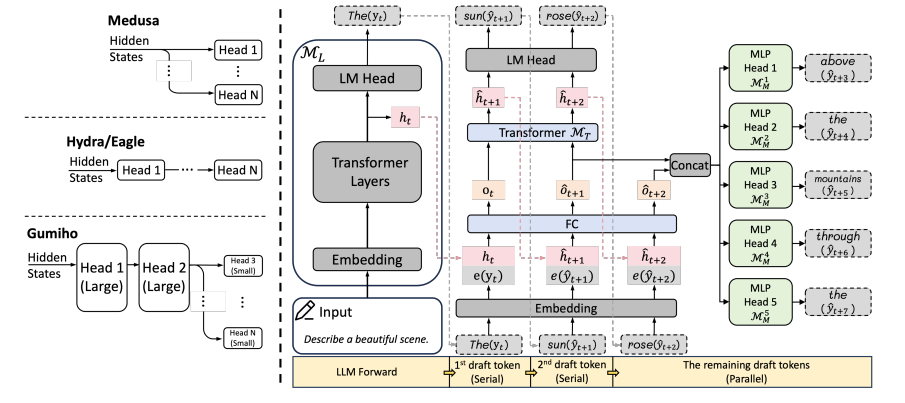

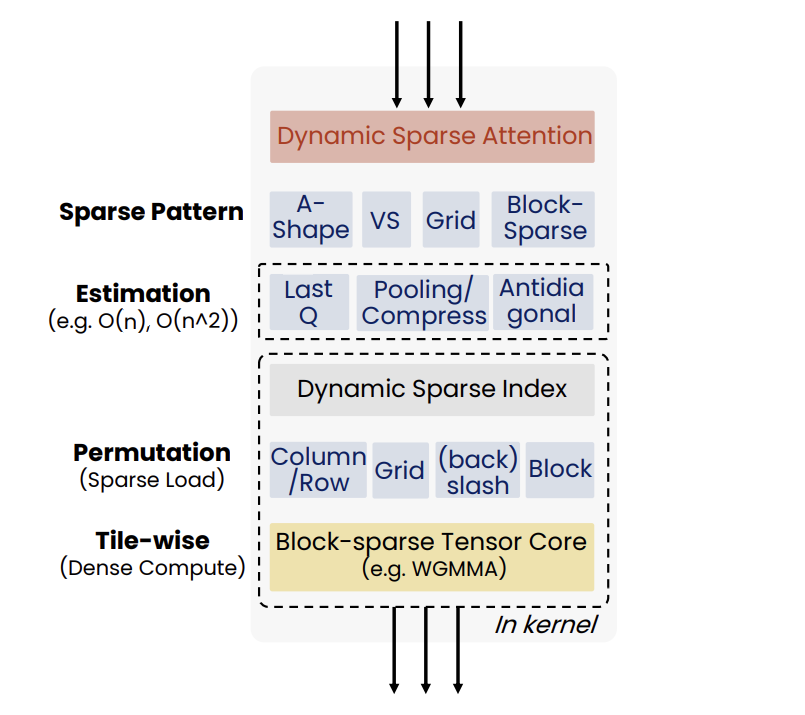

This quarter, we continue to observe the trend toward optimizing LLM-based pipelines. Besides high interest in weight quantization to precisions beyond 4 bits, we see a lot of effort in optimizing the use of the KV-cache during the ScaledDotProduct computation: from KV-cache quantization and decomposition to sparse attention, where only part of the KV-cache is used to predict the next token. This opens the opportunity to design more efficient inference pipelines with heterogeneous execution (see the RetrievalAttention work).

Highlights

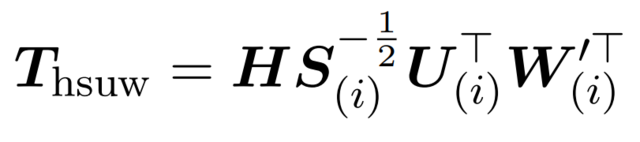

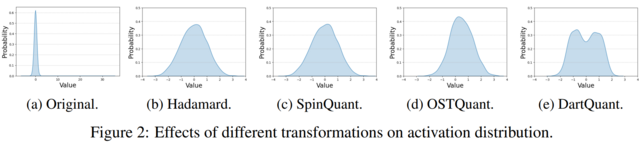

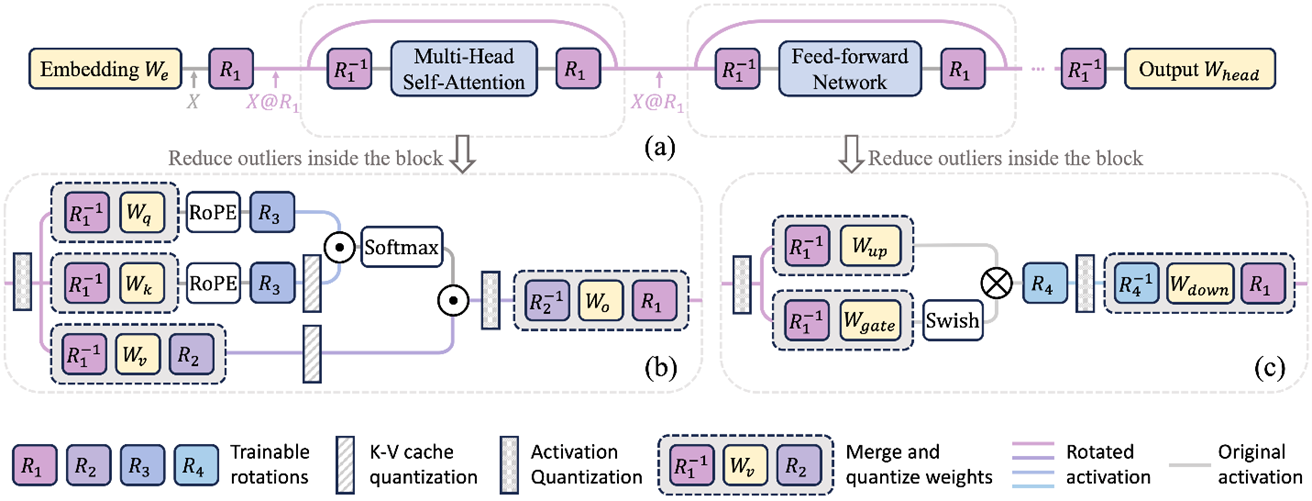

- SpinQuant: LLM Quantization with Learned Rotations by Meta (https://arxiv.org/abs/2405.16406). The paper develops the idea of rotation by a random orthogonal matrix from QuIP, QuIP#, and QuaRot to reduce outliers in LLMs and obtain better quality of W4A4KV4 quantization. The authors found that not all rotations help equally and that random rotations produce significant variance in the quality of quantized models. Therefore, they propose searching for “good” rotation matrices using Cayley optimization. The matrix optimization procedure takes a little over an hour for smaller representatives of the Llama family on 8 A100 GPUs and half a day for 70B models. Regarding quality, the method is ahead of the baselines (the closest, QuaRot, trails by about 1% on average). Adding a rotation inside the FFN gives the most significant gain. Code is available: https://github.com/facebookresearch/SpinQuant.
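The rotation idea can be illustrated with a minimal sketch. It assumes a fixed 4x4 normalized Hadamard matrix as the orthogonal rotation (SpinQuant learns its rotations; that optimization is not reproduced here), and the vectors `x` and `w` are made-up values. The sketch shows that rotating both the activation and the weight leaves their dot product unchanged while spreading an outlier channel across all channels.

```python
import math

# Normalized 4x4 Hadamard matrix: its rows are orthonormal, so it is a rotation
# (an assumption for illustration; SpinQuant learns the rotation instead).
H = [[0.5 * s for s in row] for row in (
    (1, 1, 1, 1),
    (1, -1, 1, -1),
    (1, 1, -1, -1),
    (1, -1, -1, 1),
)]

def matvec(m, v):
    """Multiply matrix m by vector v."""
    return [sum(a * b for a, b in zip(row, v)) for row in m]

def dot(a, b):
    return sum(x * y for x, y in zip(a, b))

def peak_to_rms(v):
    """Ratio of the largest magnitude to the RMS; large values indicate outliers."""
    rms = math.sqrt(sum(x * x for x in v) / len(v))
    return max(abs(x) for x in v) / rms

x = [0.1, -0.2, 0.15, 8.0]   # activation with one outlier channel (made up)
w = [0.3, -0.5, 0.2, 0.1]    # one weight row (made up)

x_rot = matvec(H, x)         # rotated activation
w_rot = matvec(H, w)         # weight rotated with the same matrix

print("output before/after:", dot(x, w), dot(x_rot, w_rot))
print("peak-to-RMS before/after:", peak_to_rms(x), peak_to_rms(x_rot))
```

Because the rotation is orthogonal, the layer output is preserved, while the flatter rotated activation leaves less of the quantization range wasted on a single channel.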

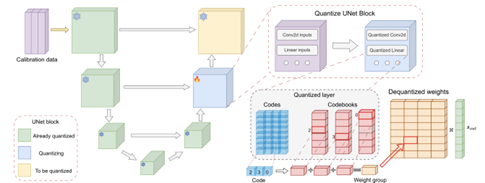

- ACCURATE COMPRESSION OF TEXT-TO-IMAGE DIFFUSION MODELS VIA VECTOR QUANTIZATION by Yandex Research, HSE University, Skoltech, MIPT, Neural Magic, IST Austria (https://arxiv.org/pdf/2409.00492). The authors explore vector-based PTQ strategies for text-to-image diffusion models and demonstrate that the compressed models yield higher-quality text-to-image generation than the scalar alternatives under the same bit-widths. They describe an effective fine-tuning technique that further closes the gap between the full-precision and compressed models, leveraging the flexibility of the vector-quantized representation. To showcase the method, they compress the weights of SDXL down to 3 bits per parameter. Extensive human evaluation and automated metrics confirm the superiority of their approach over previous diffusion compression methods under the same bit-widths. The authors illustrate that the approach can be effectively applied to distilled diffusion models, such as SDXL, which achieve nearly lossless 4-bit compression. Code is available at https://github.com/yandex-research/vqdm.
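As a rough illustration of what vector quantization of weights means, here is a minimal sketch. It assumes a tiny hand-written codebook of 2-dimensional codewords and made-up weights; real methods learn the codebook (and the paper adds fine-tuning), which is not shown. The function names are hypothetical.

```python
import math

def nearest_codeword(vec, codebook):
    """Index of the codeword closest to vec in squared Euclidean distance."""
    return min(
        range(len(codebook)),
        key=lambda k: sum((v - c) ** 2 for v, c in zip(vec, codebook[k])),
    )

def vq_encode(weights, codebook):
    """Split the weights into sub-vectors and store one codeword index per sub-vector."""
    d = len(codebook[0])
    return [nearest_codeword(weights[i:i + d], codebook)
            for i in range(0, len(weights), d)]

def vq_decode(codes, codebook):
    """Reconstruct the weights by looking up each index in the codebook."""
    return [c for k in codes for c in codebook[k]]

def bits_per_weight(codebook):
    """Index cost per weight, ignoring the storage of the codebook itself."""
    return math.log2(len(codebook)) / len(codebook[0])

# Hand-written codebook and weights, for illustration only.
codebook = [[-0.5, -0.5], [-0.5, 0.5], [0.5, -0.5], [0.5, 0.5]]
weights = [0.4, 0.6, -0.3, 0.7, 0.55, -0.45]

codes = vq_encode(weights, codebook)
print(codes, vq_decode(codes, codebook), bits_per_weight(codebook))
```

Unlike scalar quantization, each index here encodes several weights jointly, so the bit-width per parameter is set by the codebook size divided by the sub-vector dimension.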

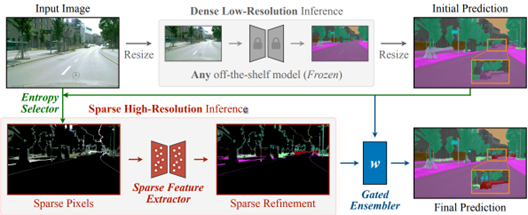

- Sparse Refinement for Efficient High-Resolution Semantic Segmentation by MIT, NVIDIA, Tsinghua University, University of Toronto, UC Berkeley (https://arxiv.org/pdf/2407.19014). The authors introduce a novel approach that enhances dense low-resolution predictions with sparse high-resolution refinements. Based on coarse low-resolution outputs, the method first uses an entropy selector to identify a sparse set of pixels with high entropy. It then employs a sparse feature extractor to generate the refinements for those pixels of interest. Finally, it leverages a gated ensembler to apply these sparse refinements to the initial coarse predictions. The method can be seamlessly integrated into any existing semantic segmentation model, whether CNN- or ViT-based. SparseRefine achieves a significant speedup of 1.5 to 3.7 times when applied to HRNet-W48, SegFormer-B5, Mask2Former-T/L and SegNeXt-L on Cityscapes, with negligible to no loss of accuracy.
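The entropy-selection step can be sketched as follows. This is a minimal illustration under stated assumptions: the coarse prediction is a flat list of per-pixel class probabilities, pixels are ranked by Shannon entropy, and a hypothetical `keep_ratio` parameter controls how many are passed on for refinement. The sparse feature extractor and the gated ensembler are not shown.

```python
import math

def pixel_entropy(probs):
    """Shannon entropy of one pixel's class distribution."""
    return -sum(p * math.log(p) for p in probs if p > 0)

def select_uncertain_pixels(prob_map, keep_ratio):
    """Return the indices of the highest-entropy pixels, in ascending index order."""
    entropies = [pixel_entropy(p) for p in prob_map]
    k = max(1, int(len(prob_map) * keep_ratio))
    ranked = sorted(range(len(prob_map)), key=lambda i: entropies[i], reverse=True)
    return sorted(ranked[:k])

# Coarse per-pixel class probabilities for four pixels (made-up values).
coarse = [
    [0.98, 0.01, 0.01],  # confident
    [0.34, 0.33, 0.33],  # very uncertain
    [0.90, 0.05, 0.05],  # fairly confident
    [0.50, 0.49, 0.01],  # uncertain between two classes
]

selected = select_uncertain_pixels(coarse, keep_ratio=0.5)
print(selected)
```

Only the selected pixels would need the expensive high-resolution pass, which is where the speedup comes from.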

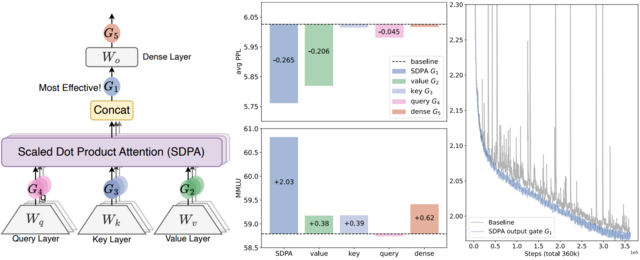

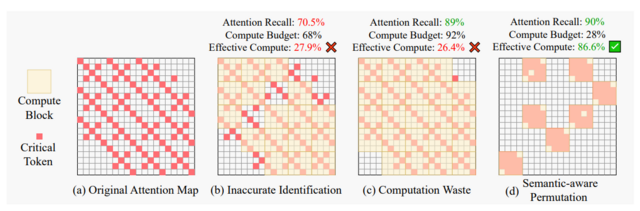

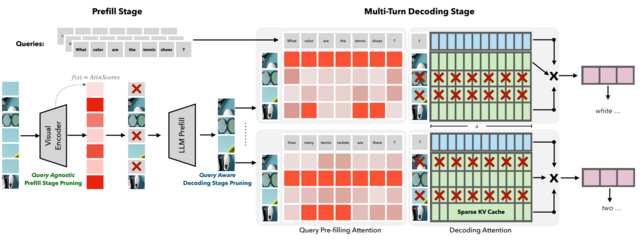

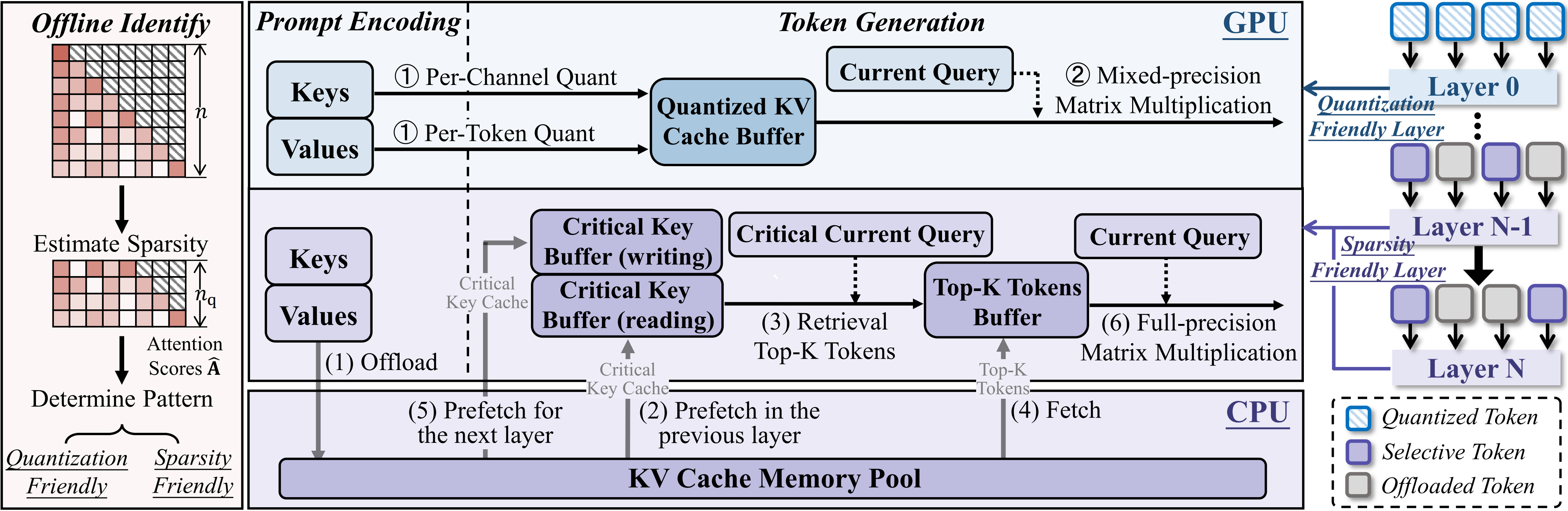

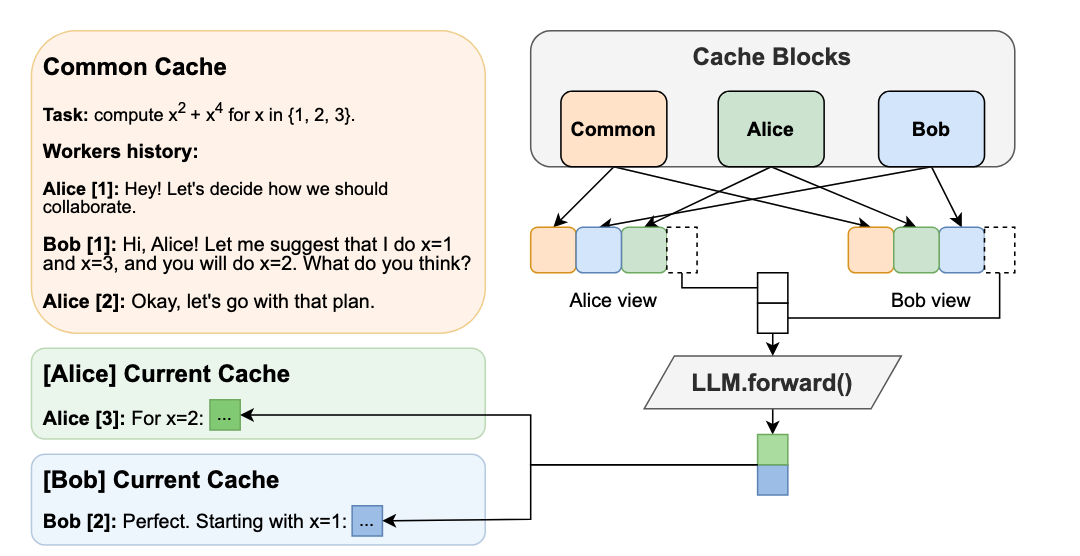

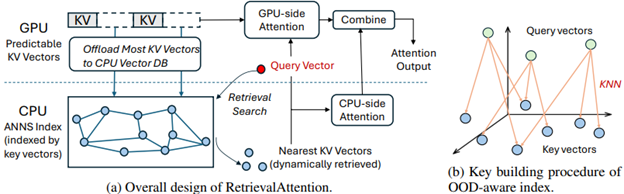

- RetrievalAttention: Accelerating Long-Context LLM Inference via Vector Retrieval by Microsoft Research, Shanghai Jiao Tong University, Fudan University (https://arxiv.org/pdf/2409.10516). The authors employ dynamic sparse attention during token generation, allowing the most critical tokens to emerge from the extensive context data. To address the out-of-distribution (OOD) issue, the method constructs a vector index tailored for the attention mechanism, focusing on the distribution of queries rather than key similarities. This approach allows for traversal of only a small subset of key vectors (1% to 3%), effectively identifying the most relevant tokens to achieve accurate attention scores and results. To optimize resource utilization, RetrievalAttention retains KV vectors in the GPU memory following static patterns while offloading the majority of KV vectors to CPU memory for index construction. This strategy enables RetrievalAttention to perform attention computation with reduced latency and minimal GPU memory utilization. The method shows SOTA results in terms of the latency-performance trade-off.
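The core observation, that attention over a small set of critical keys can approximate full attention, can be sketched as below. This is a toy illustration under stated assumptions: an exact brute-force top-k search stands in for the paper's approximate vector index, the data is synthetic with two deliberately planted critical keys, and there is no CPU/GPU split.

```python
import math
import random

def dot(a, b):
    return sum(x * y for x, y in zip(a, b))

def attention(query, keys, values, indices=None):
    """Softmax attention for one query, optionally restricted to `indices`."""
    indices = list(range(len(keys))) if indices is None else list(indices)
    scale = 1.0 / math.sqrt(len(query))
    scores = [dot(query, keys[i]) * scale for i in indices]
    top = max(scores)  # subtract the maximum for numerical stability
    weights = [math.exp(s - top) for s in scores]
    total = sum(weights)
    dim = len(values[0])
    return [sum(w * values[i][d] for w, i in zip(weights, indices)) / total
            for d in range(dim)]

def top_k_keys(query, keys, k):
    """Brute-force stand-in for a vector index: the k keys with the largest dot product."""
    ranked = sorted(range(len(keys)), key=lambda i: dot(query, keys[i]), reverse=True)
    return ranked[:k]

rng = random.Random(0)
n_keys, dim = 100, 4
keys = [[rng.uniform(-0.5, 0.5) for _ in range(dim)] for _ in range(n_keys)]
values = [[rng.uniform(-1.0, 1.0) for _ in range(dim)] for _ in range(n_keys)]
for i in (17, 63):  # plant two critical keys that align with the query
    keys[i] = [3.0, 0.0, 0.0, 0.0]
query = [6.0, 0.0, 0.0, 0.0]

selected = top_k_keys(query, keys, k=2)  # 2% of the keys
full = attention(query, keys, values)
sparse = attention(query, keys, values, selected)
max_error = max(abs(a - b) for a, b in zip(full, sparse))
print(sorted(selected), max_error)
```

In this synthetic setup the two planted keys carry almost all of the softmax mass, so attending to 2% of the keys changes the output only slightly.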

Papers with notable results

Quantization

- ADFQ-ViT: Activation-Distribution-Friendly Post-Training Quantization for Vision Transformers by Chinese universities (https://arxiv.org/pdf/2407.02763). The authors design the Per-Patch Outlier-aware Quantizer and the Shift-Log2 Quantizer, which address the challenges of outliers and irregular distributions in post-LayerNorm activations and the non-uniform distribution of positive and negative values in post-GELU activations. They also introduce attention-score enhanced module-wise optimization, which tunes the parameters of the weight and activation quantizers to reduce the error between the outputs before and after quantization. The method shows very good results for various Vision Transformer models and use cases in W4A4 and W6A6 setups.

- How Does Quantization Affect Multilingual LLMs? by Cohere (https://arxiv.org/pdf/2407.03211). The authors investigate the problem of LLM accuracy degradation after quantization. They use automatic benchmarks, LLM-as-a-Judge methods, and human evaluation, finding that (1) harmful effects of quantization are apparent in human evaluation, and automatic metrics severely underestimate the detriment: a 1.7% average drop in Japanese across automatic tasks corresponds to a 16.0% drop reported by human evaluators on realistic prompts; (2) languages are disparately affected by quantization, with non-Latin script languages impacted worst; and (3) challenging tasks such as mathematical reasoning degrade fastest.

- CLAMP-ViT: Contrastive Data-Free Learning for Adaptive Post-Training Quantization of ViTs by Georgia Institute of Technology and Intel Labs (https://arxiv.org/pdf/2407.05266). The authors incorporate a patch-level contrastive learning scheme to generate richer, semantically meaningful data. Furthermore, they leverage contrastive learning in layer-wise evolutionary search for fixed- and mixed-precision quantization to identify optimal quantization parameters while mitigating the effects of a non-smooth loss landscape. Evaluations across various vision tasks demonstrate the superiority of CLAMP-ViT, with performance improvements of up to 3% in top-1 accuracy for classification, 0.6 mAP for object detection, and 1.5 mIoU for segmentation at a similar or better compression ratio over existing alternatives. The code is available at https://github.com/georgia-tech-synergy-lab/CLAMP-ViT.git.

- RoLoRA: Fine-tuning Rotated Outlier-free LLMs for Effective Weight-Activation Quantization by Hong Kong University of Science and Technology and Meta Reality Labs (https://arxiv.org/pdf/2407.08044). The paper proposes RoLoRA, a scheme for weight-activation quantization. RoLoRA utilizes rotation for outlier elimination and proposes rotation-aware fine-tuning to preserve the outlier-free characteristics in rotated LLMs. Experimental results show that RoLoRA consistently improves low-bit LoRA convergence and post-training quantization robustness in weight-activation settings. The code is supposed to be available at https://github.com/HuangOwen/RoLoRA.

- LRQ: Optimizing Post-Training Quantization for Large Language Models by Learning Low-Rank Weight-Scaling Matrices by NAVER Cloud, KAIST AI, AITRICS, SNU AI Center (https://arxiv.org/pdf/2407.11534). The authors propose a post-training weight quantization method for LLMs that reconstructs the outputs of an intermediate Transformer block by leveraging low-rank weight-scaling matrices, replacing the conventional full weight-scaling matrices that entail as many learnable scales as their associated weights. Thanks to parameter sharing via the low-rank structure, the method needs to learn significantly fewer parameters while still enabling the individual scaling of weights, thus boosting the generalization capability of quantized LLMs. The authors show the superiority of the method over prior LLM PTQ works under (i) 8-bit weight and per-tensor activation quantization, (ii) 4-bit weight and 8-bit per-token activation quantization, and (iii) low-bit weight-only quantization schemes. The code is available at https://github.com/onliwad101/FlexRound_LRQ.

- AdaLog: Post-Training Quantization for Vision Transformers with Adaptive Logarithm Quantizer by Beihang University (https://arxiv.org/pdf/2407.12951). The paper proposes a non-uniform quantizer that optimizes the logarithmic base to accommodate the power-law-like distribution of activations while simultaneously allowing for hardware-friendly quantization and dequantization. By employing bias reparameterization, the quantizer is applicable to both the post-Softmax and post-GELU activations. The authors also develop an efficient Fast Progressive Combining Search (FPCS) strategy to determine the optimal logarithm base, as well as the scaling factors and zero points for the uniform quantizers. Experiments on public benchmarks demonstrate promising results for various ViT-based architectures and vision tasks, especially in the W6A6 setup. The code is available at https://github.com/GoatWu/AdaLog.

- RECLAIMING RESIDUAL KNOWLEDGE: A NOVEL PARADIGM TO LOW-BIT QUANTIZATION by Irish Universities (https://arxiv.org/pdf/2408.00923). The authors present an efficient low-bit PTQ framework for ConvNets by framing optimal quantization as an architecture search problem to re-capture quantization residual knowledge with low-rank adapters. They introduce a differentiable neural combinatorial optimization approach, searching for the optimal low-rank adapters using a smooth, high-order normalized Butterworth kernel. They also show that the weights of existing high-rank quantization residual convolutional operators can be converted to low-rank adapters without training. The method achieves good 4-bit and 3-bit quantization results using fewer than 250 iterations on a small calibration set of 1600 images. Code will be open-sourced.

- VQ4DiT: Efficient Post-Training Vector Quantization for Diffusion Transformers by Zhejiang University and vivo Mobile Communication (https://arxiv.org/pdf/2408.17131). The authors explore Vector Quantization methods for extremely low bit-width DiTs and introduce DiT-specific improvements for better quantization. They calibrate both the codebook and the assignments of each layer simultaneously. The proposed method calculates the candidate assignment set for each weight sub-vector based on Euclidean distance and reconstructs the sub-vector based on the weighted average. Then, using the zero-data and block-wise calibration method, the optimal assignment from the set is efficiently selected while calibrating the codebook. The method achieves competitive evaluation results compared to full-precision models on ImageNet.

- MobileQuant: Mobile-friendly Quantization for On-device Language Models by Samsung AI Center, Cambridge (https://arxiv.org/pdf/2408.13933). The authors introduce a post-training quantization approach for LLMs that is supported by current mobile hardware implementations (i.e., DSP, NPU), thus being directly deployable on real edge devices. The method improves upon prior works through simple yet effective methodological extensions that make it possible to quantize most activations to a lower bit-width (i.e., 8-bit) with near-lossless performance. They conduct an on-device evaluation of model accuracy, inference latency, and energy consumption. The results indicate that the proposed method reduces inference latency and energy usage by 20%-50% while still maintaining accuracy compared to models using 16-bit activations.

- Low-Bitwidth Floating Point Quantization for Efficient High-Quality Diffusion Models by the University of Toronto & Vector Institute (https://arxiv.org/pdf/2408.06995). The authors propose a floating-point quantization method for diffusion models that provides better image quality compared to integer quantization methods. The method integrates weight rounding learning into the mapping of full-precision values to quantized values. The authors also study integer and floating-point quantization methods in state-of-the-art diffusion models. Additionally, they introduce a methodology to evaluate quantization effects, highlighting shortcomings of existing output quality metrics and experimental methodologies. Finally, their floating-point quantization method increases model sparsity by an order of magnitude, enabling further optimization opportunities.

- DopQ-ViT: Towards Distribution-Friendly and Outlier-Aware Post-Training Quantization for Vision Transformers by Institute of Automation and School of Artificial Intelligence of Chinese Academy of Sciences (https://arxiv.org/pdf/2408.03291v2). The paper focuses on the full quantization of Vision Transformers. The authors propose using the Tan Quantizer, which focuses more on values near 1, thereby better fitting the distribution of post-Softmax activations in Transformer layers. In addition, the method selects the median as the optimal scaling factor, effectively addressing the accuracy degradation that occurs after parametrizing post-LayerNorm activations. The method achieves very accurate results, especially in the W6A6 setup, for various tasks such as ImageNet or MS COCO.

- Differentiable Product Quantization for Memory Efficient Camera Relocalization by Czech Technical University in Prague, Aalto University, University of Oulu (https://arxiv.org/pdf/2407.15540). The authors (i) introduce a simple and standalone metric learning approach for Differentiable Product Quantization for 3D scene compression that preserves the matching properties of the descriptors and the final camera localization performance; (ii) show that the proposed hybrid method enables a better tradeoff between memory complexity and localization; and (iii) analyze the tradeoffs between description and map compression and show how localization is more tolerant to description compression on outdoor and indoor datasets. The code will be publicly available at https://github.com/AaltoVision/dpqe.

- Advancing Multimodal Large Language Models with Quantization-Aware Scale Learning for Efficient Adaptation by Xiamen University and SkyWork AI (https://arxiv.org/pdf/2408.03735). The paper introduces a Quantization-aware Scale Learning method based on multimodal warmup. This method is grounded in two key innovations: (1) the learning of group-wise scale factors for quantized LLM weights to mitigate the quantization error arising from activation outliers and achieve more effective vision-language instruction tuning; (2) the implementation of a multimodal warmup that progressively integrates linguistic and multimodal training samples, thereby preventing overfitting of the quantized model to multimodal data while ensuring stable adaptation of multimodal large language models to downstream vision-language tasks. The code is supposed to be available at https://github.com/xjjxmu/QSLAW.

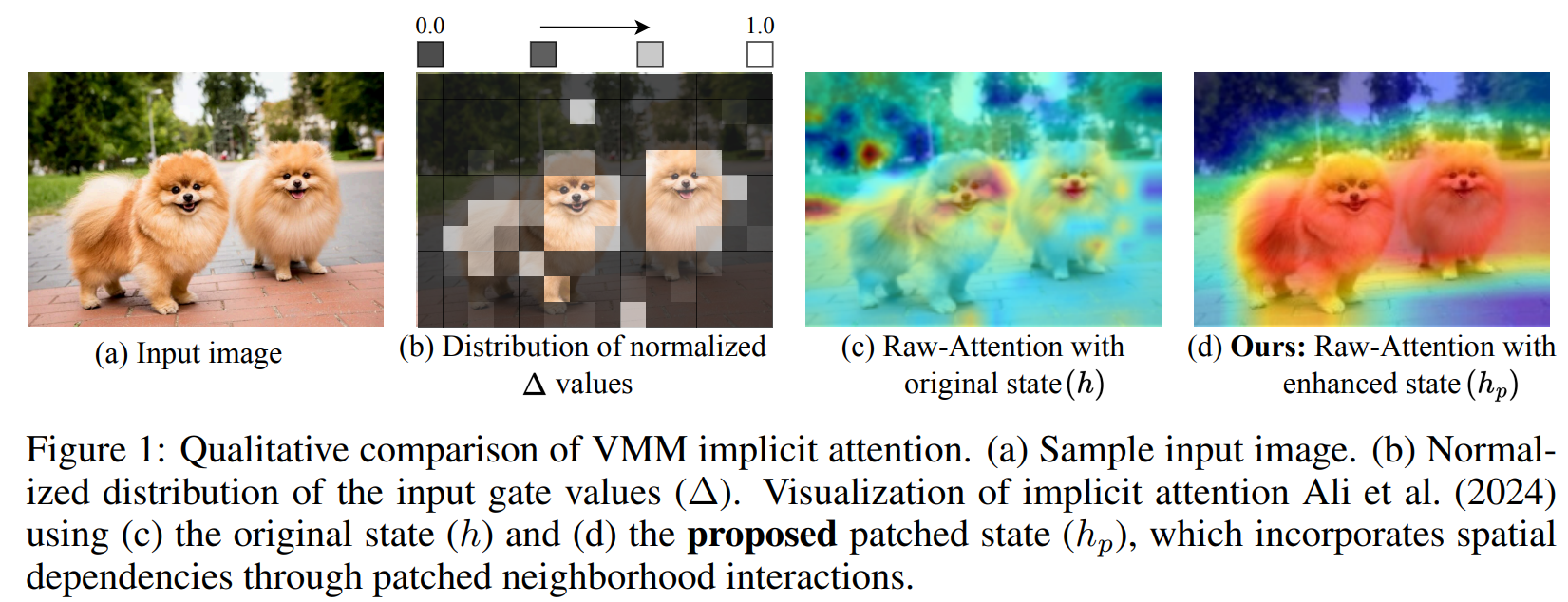

- Mamba-PTQ: Outlier Channels in Recurrent Large Language Models by Intel Labs (https://arxiv.org/pdf/2407.12397). This workshop paper is among the first to study post-training quantization on the Mamba architecture. As with Transformer models, the authors observe outlier channels in activations (those with absolute maximum values exceeding 6 standard deviations from the layer mean) and find that downstream task performance degrades substantially when these channels are removed. The study presents zero-shot results of naïve symmetric per-tensor quantization of weights and activations across Mamba1 models, ranging from 130M to 2.8B parameters, providing a baseline for future quantization research on this emerging architecture.

- Foundation of Large Language Model Compression – Part 1: Weight Quantization by CSAIL MIT (https://arxiv.org/pdf/2409.02026). This work introduces CVXQ, a post-training weight quantization framework that assigns varying bit widths down to the per-group level, constrained by a target average bit rate per weight element. Formulated through the lens of Lagrangian convex optimization, the framework leads to a dual-ascent method that alternately updates the bit width and the tradeoff variable until all optimality conditions are met. To overcome the non-differentiability arising from discrete bit widths, and assuming that weight distributions are Gaussian or Laplacian, the framework leverages a well-known result from rate-distortion theory to provide closed-form derivative estimates during optimization. CVXQ adopts an interesting companding (non-uniform) quantization, where weights are first projected to the sigmoid domain before applying uniform round-to-nearest quantization. A codebook is employed to enable dequantization via simple lookup, avoiding complex inverse computations. Tested across a wide range of model sizes in OPT and Llama2, CVXQ outperforms GPTQ, AWQ, and OWQ at 3- and 4-bit rates per weight in nearly all cases. According to the authors, the full implementation will be available soon.
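The companding step with lookup-based dequantization can be sketched as below. This is a simplified illustration, not the CVXQ implementation: it assumes a fixed bit width and a hand-picked `scale`, whereas the framework selects bit widths per group through optimization. All function names are hypothetical.

```python
import math

def sigmoid(x):
    return 1.0 / (1.0 + math.exp(-x))

def logit(y):
    return math.log(y / (1.0 - y))

def build_codebook(bits, scale):
    """Reconstruction value of each code: the bin center mapped back through the inverse sigmoid."""
    levels = 2 ** bits
    return [scale * logit((i + 0.5) / levels) for i in range(levels)]

def quantize(weights, bits, scale):
    """Map weights into (0, 1) with a sigmoid, then pick the uniform bin.

    Taking the bin index is equivalent to rounding to the nearest bin center.
    """
    levels = 2 ** bits
    return [min(levels - 1, int(sigmoid(w / scale) * levels)) for w in weights]

def dequantize(codes, codebook):
    """Dequantization is a table lookup; no inverse sigmoid is evaluated at run time."""
    return [codebook[c] for c in codes]

weights = [-0.9, -0.1, 0.0, 0.05, 0.4, 1.5]  # made-up values
scale = 0.5                                   # assumed, not optimized
codebook = build_codebook(3, scale)
codes = quantize(weights, 3, scale)
restored = dequantize(codes, codebook)
print(codes)
print([round(v, 3) for v in restored])
```

The resulting levels are dense near zero and sparse in the tails, which matches bell-shaped weight distributions better than a uniform grid.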

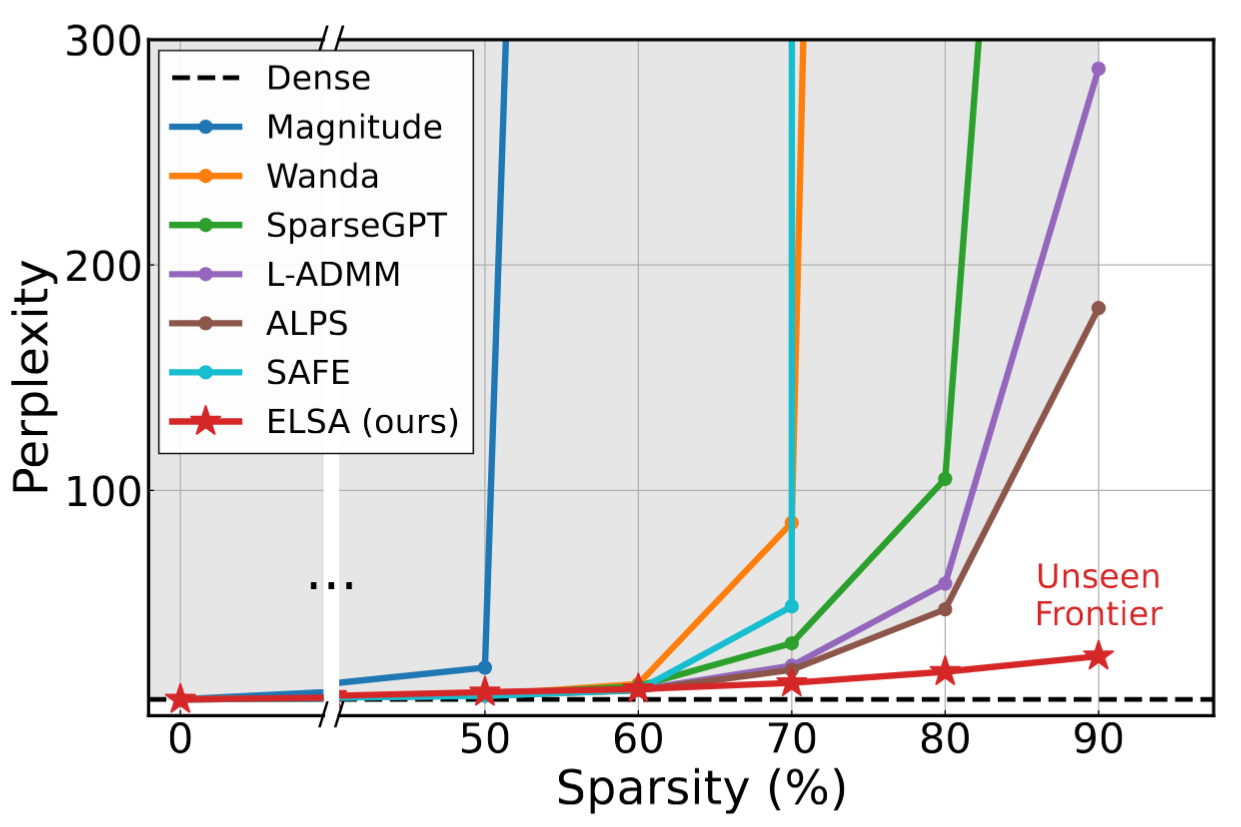

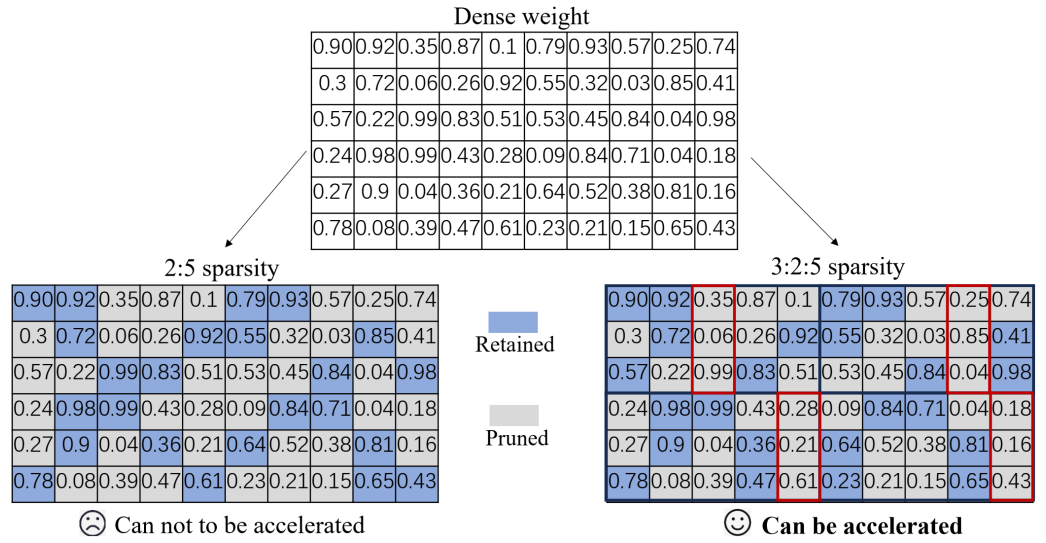

Pruning / Sparsity

- LazyLLM: DYNAMIC TOKEN PRUNING FOR EFFICIENT LONG CONTEXT LLM INFERENCE by Apple and Meta AI (https://arxiv.org/pdf/2407.14057). The paper introduces an LLM acceleration method that selectively computes the KV for tokens important for the next token prediction in both the prefilling and decoding stages. In contrast to static pruning approaches that prune the prompt at once, LazyLLM allows language models to dynamically select different subsets of tokens from the context in different generation steps, even if those tokens were pruned in previous steps. The method also introduces the concept of an AuxCache to store the tokens that were omitted during previous steps of text generation but are required at the current step. Experiments on standard datasets across various tasks demonstrate that LazyLLM can significantly accelerate generation without fine-tuning, e.g., it speeds up the prefilling stage of the Llama 2 7B model by 2.34x while maintaining accuracy.

- Compact Language Models via Pruning and Knowledge Distillation by Nvidia (https://www.arxiv.org/pdf/2407.14679). Authors propose compression best practices for LLMs that combine depth, width, attention, and MLP pruning with knowledge distillation-based retraining. They arrive at these best practices through a detailed empirical exploration of pruning strategies for each axis, methods to combine axes, distillation strategies, and search techniques for arriving at optimal compressed architectures. They use this guide to compress the Nemotron-4 family of LLMs by a factor of 2-4× and compare their performance to similarly-sized models on a variety of language modeling tasks. Deriving 8B and 4B models from an already pretrained 15B model using this approach requires up to 40x fewer training tokens per model compared to training from scratch; this results in compute cost savings of 1.8x for training the full model family (15B, 8B, and 4B).

- SQFT: Low-cost Model Adaptation in Low-precision Sparse Foundation Models by Intel Labs (https://github.com/IntelLabs/Hardware-Aware-Automated-Machine-Learning). This paper proposes an end-to-end solution for low-precision sparse parameter-efficient fine-tuning of large pre-trained models. It includes an innovative strategy that enables the merging of sparse weights with low-rank adapters without losing the sparsity induced in the base model, overcoming the limitations of previous approaches. SQFT also addresses the challenge of having quantized weights and adapters with different numerical precisions, enabling merging in the desired numerical format without sacrificing accuracy. Multiple adaptation scenarios, models, and comprehensive sparsity levels demonstrate the effectiveness of SQFT. Models and open-source code are available.

- ShadowLLM: Predictor-based Contextual Sparsity for Large Language Models by Cornell University and Google (https://arxiv.org/abs/2406.16635). Contemporary research on contextual sparsity primarily uses magnitude-based metrics to measure the importance of attention heads and neurons in LLMs. This paper assesses various importance metrics from the literature, including those based on (1) activation norm, (2) first-order gradient, (3) a combination of norm and gradient, (4) second-order gradient, and (5) sensitivity. The authors conclude that the PlainAct criterion (the L1-norm of the product of magnitude and gradient) emerges as the better metric by offering a robust sparsity-task tradeoff and learnability in importance rank. The authors also propose using just a single predictor, with the attention scores of the first transformer block as input, to forecast sparsity patterns for the entire LLM, as opposed to DejaVu, which requires predictors at regular intervals of transformer blocks. This innovation simplifies predictor training and implementation while also reducing inference overhead, achieving up to 20% faster generation than DejaVu across sizes of the OPT family. The code is publicly available.

- STUN: Structured-Then-Unstructured Pruning for Scalable MoE Pruning by SNU and Snowflake AI Research (https://arxiv.org/pdf/2409.06211). The work discovers a novel way to prune experts of MoE models that reduces the complexity of expert selection from combinatorial O(k^n/√n) down to O(1) using several greedy assumptions. The authors exploit the structure of the router weights, applying clustering based on a so-called behavioral similarity metric to identify (dis)similar experts, and utilize the centroid as the pruned representation to compute a first-order Taylor approximation of the relative distortion. The entire expert pruning can be run effectively without any calibration data and does not necessarily require a GPU, which matters especially for MoE models with large numbers of experts. The work also finds that expert pruning followed by unstructured pruning provides a better Pareto front. A key result on Snowflake Arctic, a 480B-parameter MoE with 128 experts, shows that STUN achieves 40% sparsity with minimal performance loss in just two hours using a single H100 GPU, where unstructured pruning methods alone fall short.

Other

- Accuracy is Not All You Need by Microsoft Research, India (https://arxiv.org/pdf/2407.09141). The authors study the accuracy difference between compressed and source models. They claim that when the accuracy metrics are similar, they observe the phenomenon of flips, wherein answers change from correct to incorrect and vice versa in proportion. The authors conduct a detailed study of metrics across multiple compression techniques, models, and datasets, demonstrating that the behavior of compressed models as visible to end users is often significantly different from the baseline model, even when accuracy is similar. They further evaluate compressed models qualitatively and quantitatively using MT-Bench, showing that compressed models are significantly worse than baseline models in this free-form generative task. They argue that compression techniques should also be evaluated using distance metrics. Finally, the authors propose two metrics, KL-Divergence and % flips, and show that they are well correlated.
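The two proposed metrics are simple to compute. The sketch below uses made-up per-question correctness flags and made-up answer distributions, and the function names are hypothetical. It shows a case where accuracy is identical while half of the answers flip.

```python
import math

def accuracy(correct):
    return sum(correct) / len(correct)

def flip_rate(baseline_correct, compressed_correct):
    """Fraction of questions whose correctness changed in either direction."""
    flips = sum(b != c for b, c in zip(baseline_correct, compressed_correct))
    return flips / len(baseline_correct)

def kl_divergence(p, q):
    """KL(p || q) between two answer distributions over the same options."""
    return sum(pi * math.log(pi / qi) for pi, qi in zip(p, q) if pi > 0)

# Per-question correctness of the baseline and the compressed model (made up).
baseline = [True, True, False, True, False, True, True, False]
compressed = [True, False, True, True, True, False, True, False]

print("accuracy:", accuracy(baseline), accuracy(compressed))
print("flips:", flip_rate(baseline, compressed))

# Probabilities over answer options for one question (made up).
p_baseline = [0.7, 0.2, 0.1]
p_compressed = [0.5, 0.3, 0.2]
print("KL:", kl_divergence(p_baseline, p_compressed))
```

Both models score 5 out of 8 here, yet four answers changed, which is exactly the kind of divergence that accuracy alone hides.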

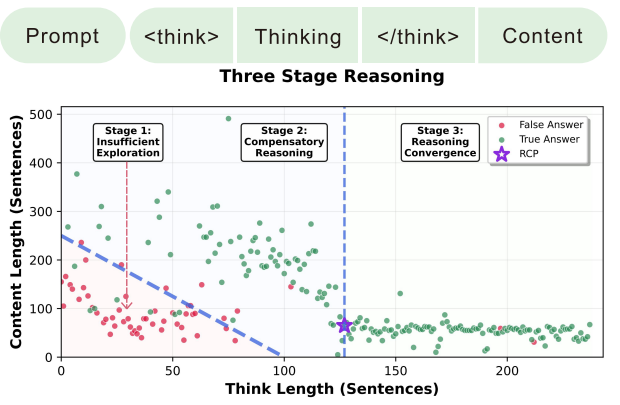

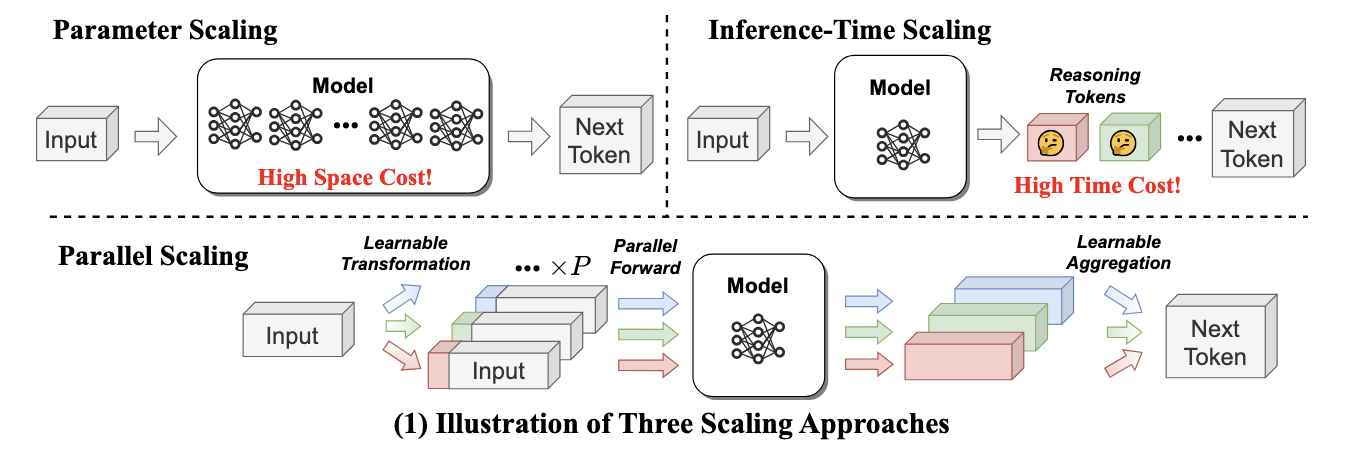

- Scaling LLM Test-Time Compute Optimally can be More Effective than Scaling Model Parameters by UC Berkeley and Google DeepMind (https://arxiv.org/pdf/2408.03314). The paper studies the scaling of inference-time computation in LLMs, focusing on answering the question: if an LLM is allowed to use a fixed but non-trivial amount of inference-time compute, how much can it improve its performance on a challenging prompt? Answering this question has implications not only for the achievable performance of LLMs, but also for the future of LLM pretraining and how one should trade off inference-time and pre-training compute. The authors analyze two primary mechanisms to scale test-time computation: (1) searching against dense, process-based verifier reward models; and (2) updating the model’s distribution over a response adaptively, given the prompt at test time. They find that in both cases, the effectiveness of different approaches to scaling test-time compute critically varies depending on the difficulty of the prompt. This observation motivates applying a “compute-optimal” scaling strategy, which allocates test-time compute adaptively per prompt. Using this compute-optimal strategy, the authors improve the efficiency of test-time compute scaling by more than 4x compared to a best-of-N baseline. Additionally, in a FLOPs-matched evaluation, they find that on problems where a smaller base model attains somewhat non-trivial success rates, test-time compute can be used to outperform a 14x larger model.

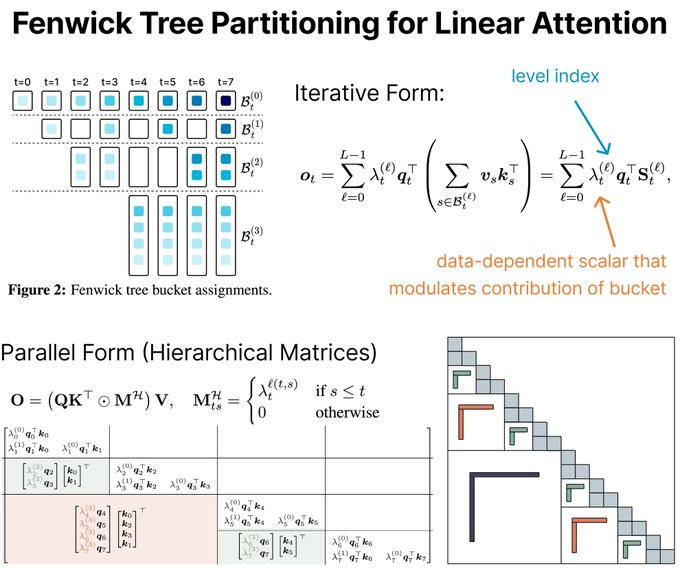

- Transformers are SSMs: Generalized Models and Efficient Algorithms Through Structured State Space Duality by Tri Dao and Albert Gu (https://arxiv.org/abs/2405.21060). This paper discusses improvements to Mamba, the selective structured state space model (SSM) proposed as an alternative to Transformer-based models. The authors provide a framework called State Space Duality (SSD) that connects SSMs and variants of the attention mechanism. The Mamba-2 architecture is proposed, which obtains a 2-8x speedup compared to the previous version of Mamba and is designed to be friendly to tensor and sequence parallelism. Experiments show that Mamba-2 outperforms Mamba and Transformer-based models at different model sizes. The authors also discuss hybrid models that can benefit from the combination of SSD with components from Transformer blocks.
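The connection between SSMs and attention can be illustrated with a toy example. The sketch below assumes a scalar hidden state and made-up per-step parameters `a`, `b`, `c`; it is a simplification for illustration, not the Mamba-2 algorithm. It computes the same sequence map twice: once as a linear recurrence, and once as multiplication by a lower-triangular matrix, where `c[t]` plays the role of a query, `b[s]` of a key, and the product of decays acts as a causal mask.

```python
def ssm_recurrent(a, b, c, x):
    """Linear-time form: h_t = a_t * h_{t-1} + b_t * x_t, y_t = c_t * h_t."""
    h, ys = 0.0, []
    for a_t, b_t, c_t, x_t in zip(a, b, c, x):
        h = a_t * h + b_t * x_t
        ys.append(c_t * h)
    return ys

def ssm_matrix(a, b, c, x):
    """Quadratic, attention-like form: y_t = sum over s <= t of c_t * decay(s, t) * b_s * x_s."""
    ys = []
    for t in range(len(x)):
        y = 0.0
        for s in range(t + 1):
            decay = 1.0
            for r in range(s + 1, t + 1):  # product of a_r for s < r <= t
                decay *= a[r]
            y += c[t] * decay * b[s] * x[s]
        ys.append(y)
    return ys

# Made-up per-step parameters and inputs.
a = [0.9, 0.8, 0.95, 0.7]
b = [1.0, 0.5, -0.3, 0.2]
c = [0.4, 1.0, 0.6, -0.5]
x = [1.0, 2.0, -1.0, 0.5]

rec = ssm_recurrent(a, b, c, x)
mat = ssm_matrix(a, b, c, x)
print(rec)
print(mat)
```

Both functions return the same outputs up to floating-point rounding; the recurrent form is cheaper for generation, while the matrix form maps onto hardware-friendly matrix multiplications.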

Software

- A thorough analysis of performance and bottlenecks when using 4-bit KV cache on Nvidia with PyTorch: https://pytorch.org/blog/int4-decoding. Authors show step-by-step improvement when computing the Self-Attention operation of the Transformer block and compare results with CUDA and Flash Decoding baselines in 4-bit per-row and per-channel quantization settings of KV-cache.

- Llama.cpp introduced SIMD-friendly ternary weights packing/unpacking: https://compilade.net/blog/ternary-packing

- HuggingFace posted an exploratory work and recipes for ternary weights (1.58 bits) LLM weight compression: https://huggingface.co/blog/1_58_llm_extreme_quantization.
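To make the "1.58 bits" figure concrete: a ternary weight carries log2(3) ≈ 1.585 bits, and five ternary values fit into one byte because 3^5 = 243 ≤ 256, giving 1.6 bits per weight. The sketch below uses plain base-3 packing with hypothetical function names; it does not reproduce the SIMD-friendly layout described in the llama.cpp post.

```python
def pack_ternary(weights):
    """Pack values from {-1, 0, 1} into bytes, five per byte, as base-3 digits."""
    packed = bytearray()
    for start in range(0, len(weights), 5):
        group = weights[start:start + 5]
        byte = 0
        for w in reversed(group):  # the first weight ends up as the lowest digit
            byte = byte * 3 + (w + 1)
        packed.append(byte)
    return bytes(packed)

def unpack_ternary(packed, count):
    """Inverse of pack_ternary; `count` trims the padding of the last byte."""
    weights = []
    for byte in packed:
        for _ in range(5):
            weights.append(byte % 3 - 1)
            byte //= 3
    return weights[:count]

weights = [1, 0, -1, -1, 1, 0, 0, 1, -1, 1, 1, -1]  # made-up ternary weights
packed = pack_ternary(weights)
print(len(packed), "bytes for", len(weights), "weights")
print(unpack_ternary(packed, len(weights)) == weights)
```

Twelve weights occupy three bytes here; the last byte is only partly used, so the 1.6 bits per weight figure is reached when the weight count is a multiple of five.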
