Enable ControlNet with Stable Diffusion Pipeline via Optimum-Intel

Authors: Tianmeng Chen, Xiake Sun

Introduction

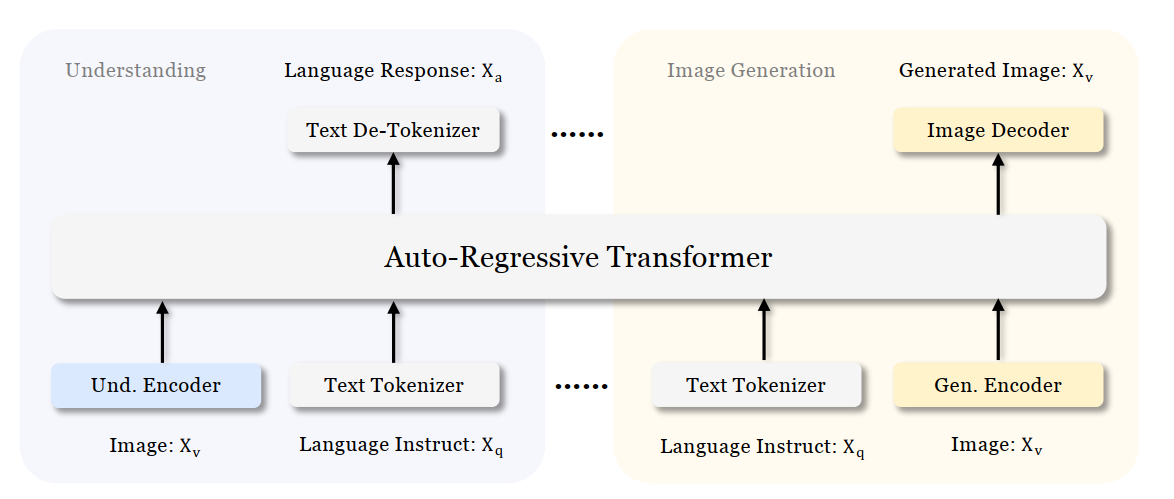

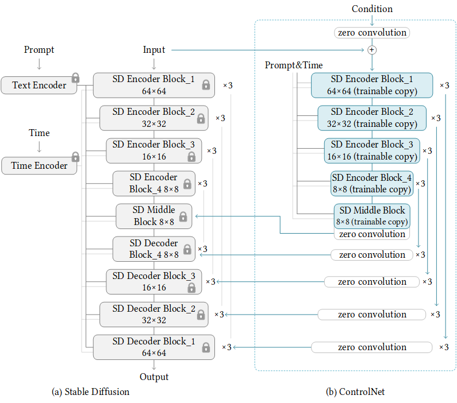

Stable Diffusion is a generative artificial intelligence model that produces unique images from text and image prompts. ControlNet is a neural network that controls image generation in Stable Diffusion by adding extra conditions. The specific structure of Stable Diffusion + ControlNet is shown below:

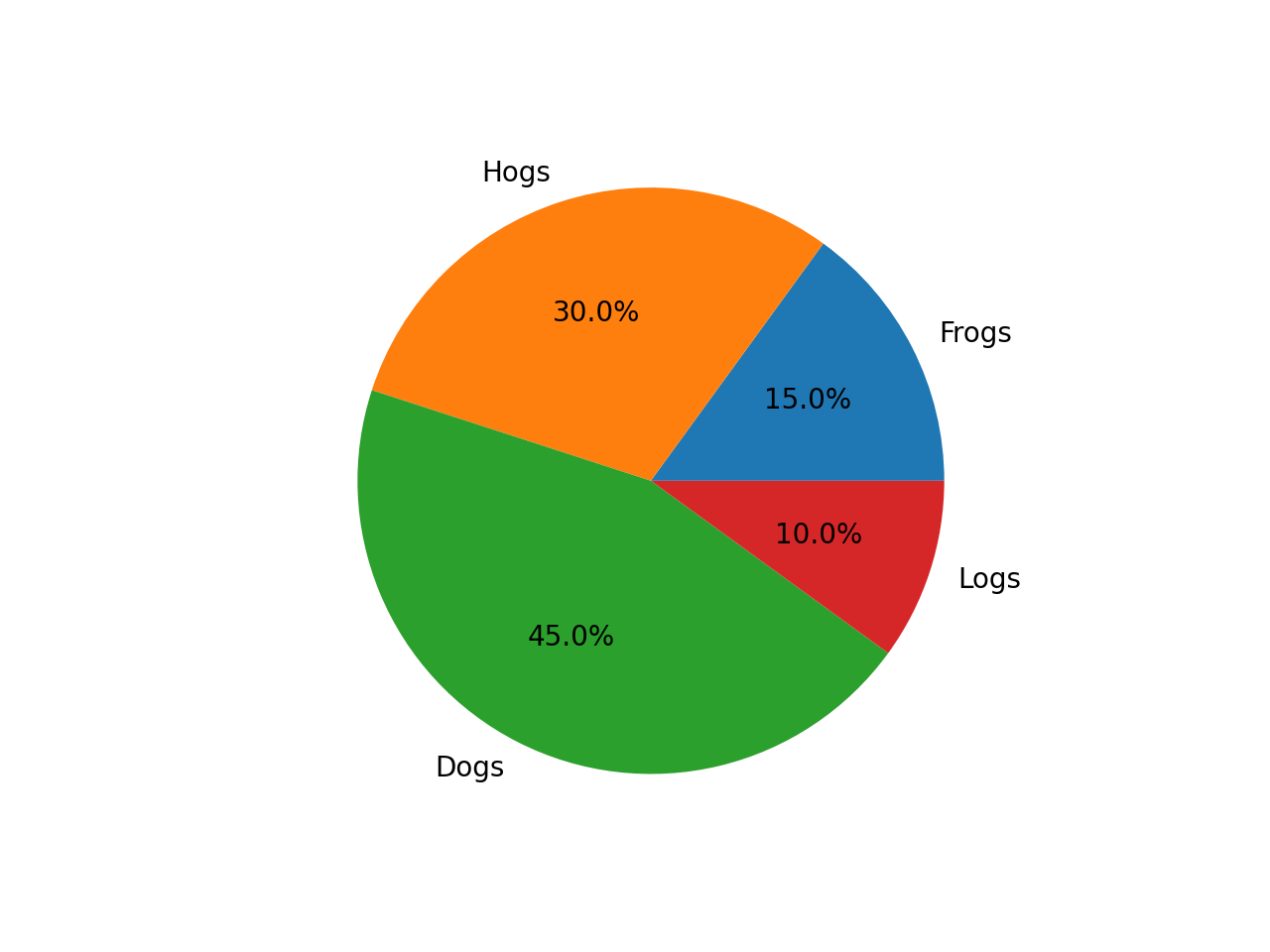
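
To make the wiring a little more concrete, here is a toy, framework-free sketch of how ControlNet outputs are commonly merged into the UNet: ControlNet produces one residual per UNet skip connection plus one for the middle block, and each residual is scaled and added to the matching UNet feature. The function name, the `conditioning_scale` parameter, and the use of plain floats in place of feature tensors are assumptions made for illustration only.

```python
from typing import List, Tuple


def apply_controlnet_residuals(
    unet_skips: List[float],
    unet_mid: float,
    control_down: List[float],
    control_mid: float,
    conditioning_scale: float = 1.0,
) -> Tuple[List[float], float]:
    """Add scaled ControlNet residuals to the UNet skip and mid features.

    Plain floats stand in for feature tensors so the sketch needs no framework.
    """
    if len(unet_skips) != len(control_down):
        raise ValueError("expected one ControlNet residual per UNet skip connection")
    merged_skips = [
        skip + conditioning_scale * residual
        for skip, residual in zip(unet_skips, control_down)
    ]
    merged_mid = unet_mid + conditioning_scale * control_mid
    return merged_skips, merged_mid


skips, mid = apply_controlnet_residuals(
    [1.0, 2.0], 3.0, [0.5, 0.5], 1.0, conditioning_scale=2.0
)
print(skips, mid)
```

A larger `conditioning_scale` makes the extra condition weigh more heavily on the generated image.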

In many cases, ControlNet is used together with other models or frameworks, such as OpenPose, Canny, Line Art, and Depth. Here is an example of Stable Diffusion + ControlNet + OpenPose:

OpenPose identifies the key points of the human body in the left image to produce a pose image. The pose image is then fed to ControlNet and Stable Diffusion to generate the right image. In this way, ControlNet steers what Stable Diffusion generates.

In this blog, we focus on enabling the Stable Diffusion pipeline with ControlNet in Optimum-Intel. Some details can be found in this open PR.

How to enable StableDiffusionControlNet pipeline in Optimum-Intel

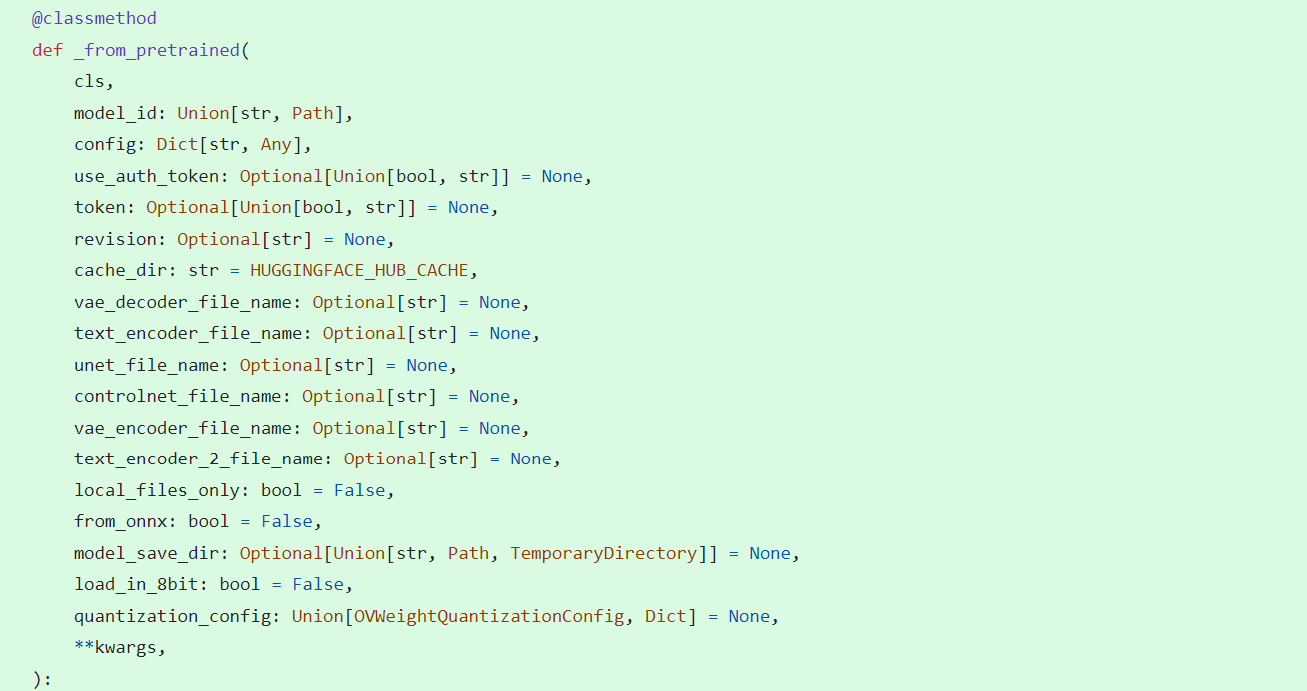

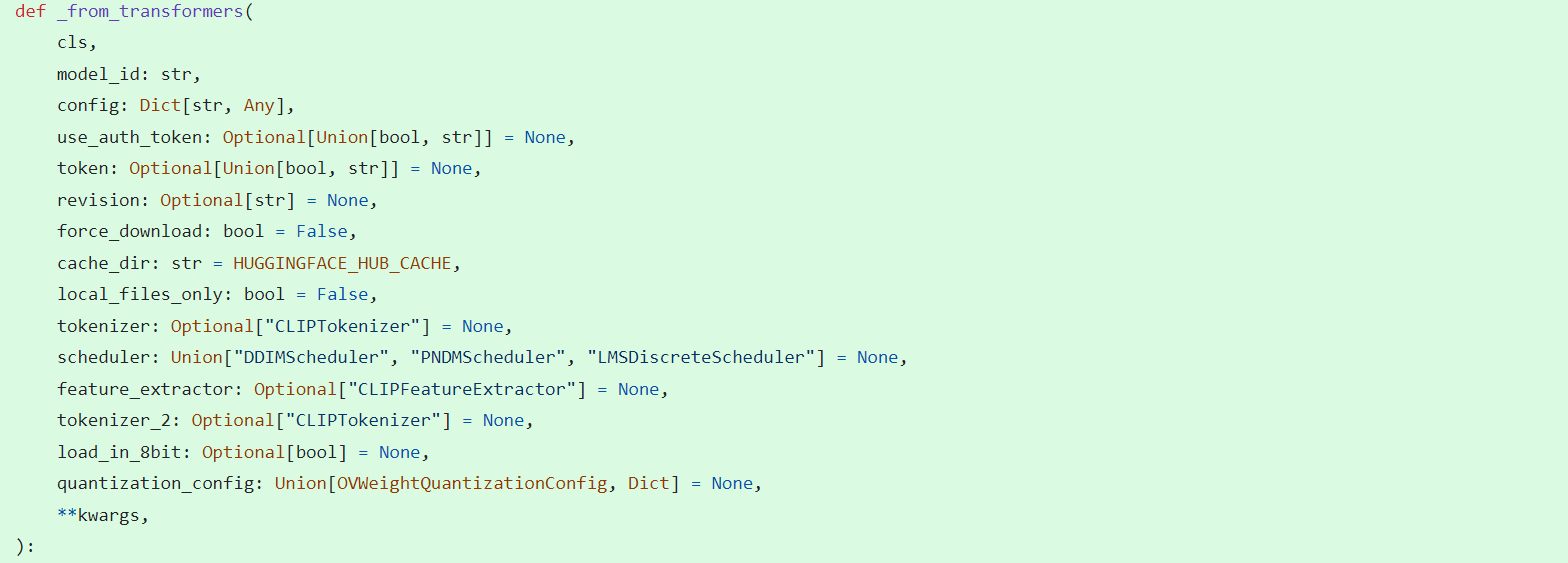

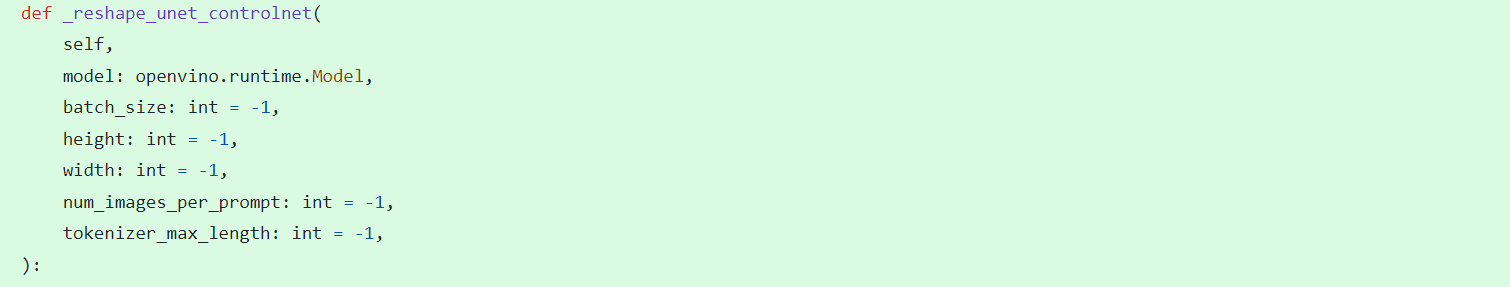

The key code lives in optimum/intel/openvino/modeling_diffusion.py and optimum/exporters/openvino/model_configs.py. The file modeling_diffusion.py holds the diffusion pipeline code, where you can find several classes: OVStableDiffusionPipelineBase, OVStableDiffusionPipeline, OVStableDiffusionXLPipelineBase, OVStableDiffusionXLPipeline, and so on. What we need to do is mimic these classes to add OVStableDiffusionControlNetPipelineBase, StableDiffusionContrlNetPipelineMixin, and OVStableDiffusionControlNetPipeline. The most important parts are as follows:

_from_pretrained function in class OVStableDiffusionControlNetPipelineBase: initializes the whole pipeline from local files or from a download.

_from_transformers function in class OVStableDiffusionControlNetPipelineBase: converts the PyTorch models to OpenVINO IR models.

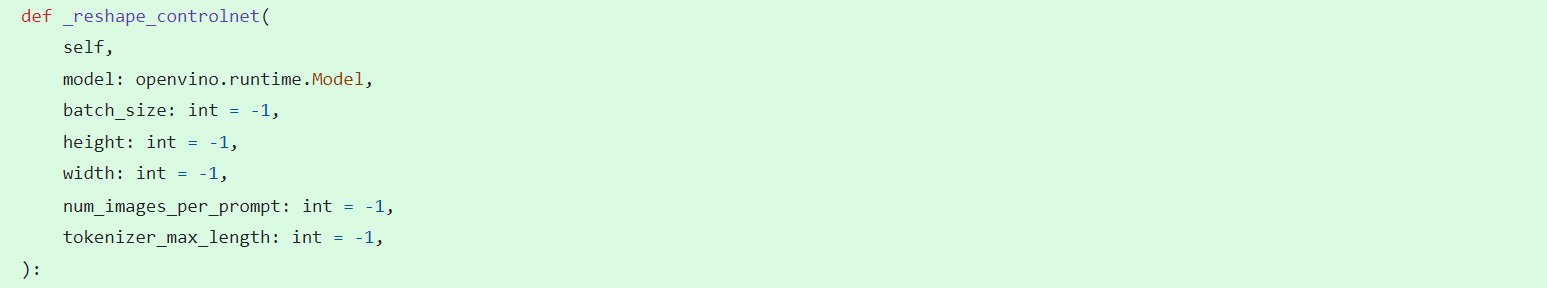

_reshape_unet_controlnet and _reshape_controlnet in class OVStableDiffusionControlNetPipelineBase: reshape the dynamic-shape OpenVINO IR models to static shapes in order to reduce memory cost.

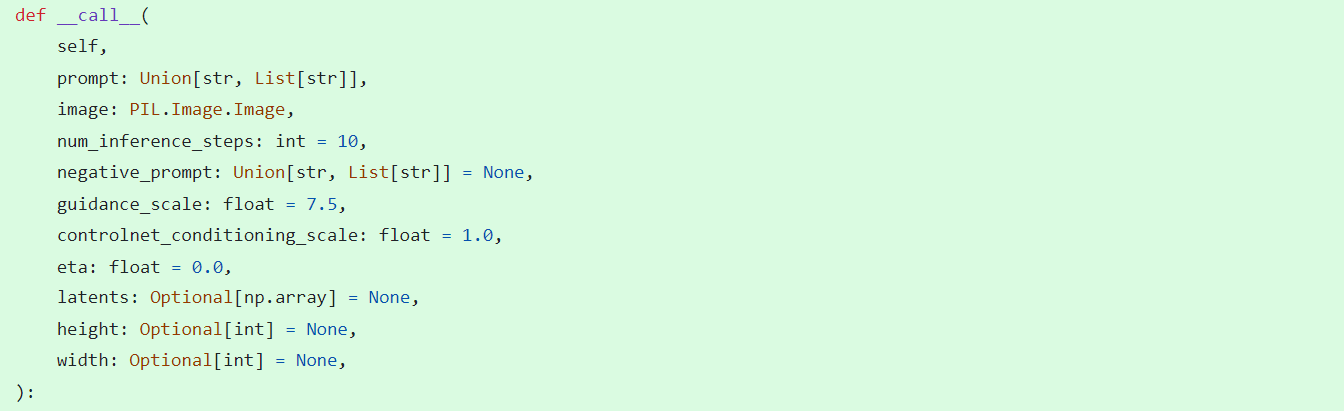

__call__ function in class StableDiffusionContrlNetPipelineMixin: runs inference through the pipeline.

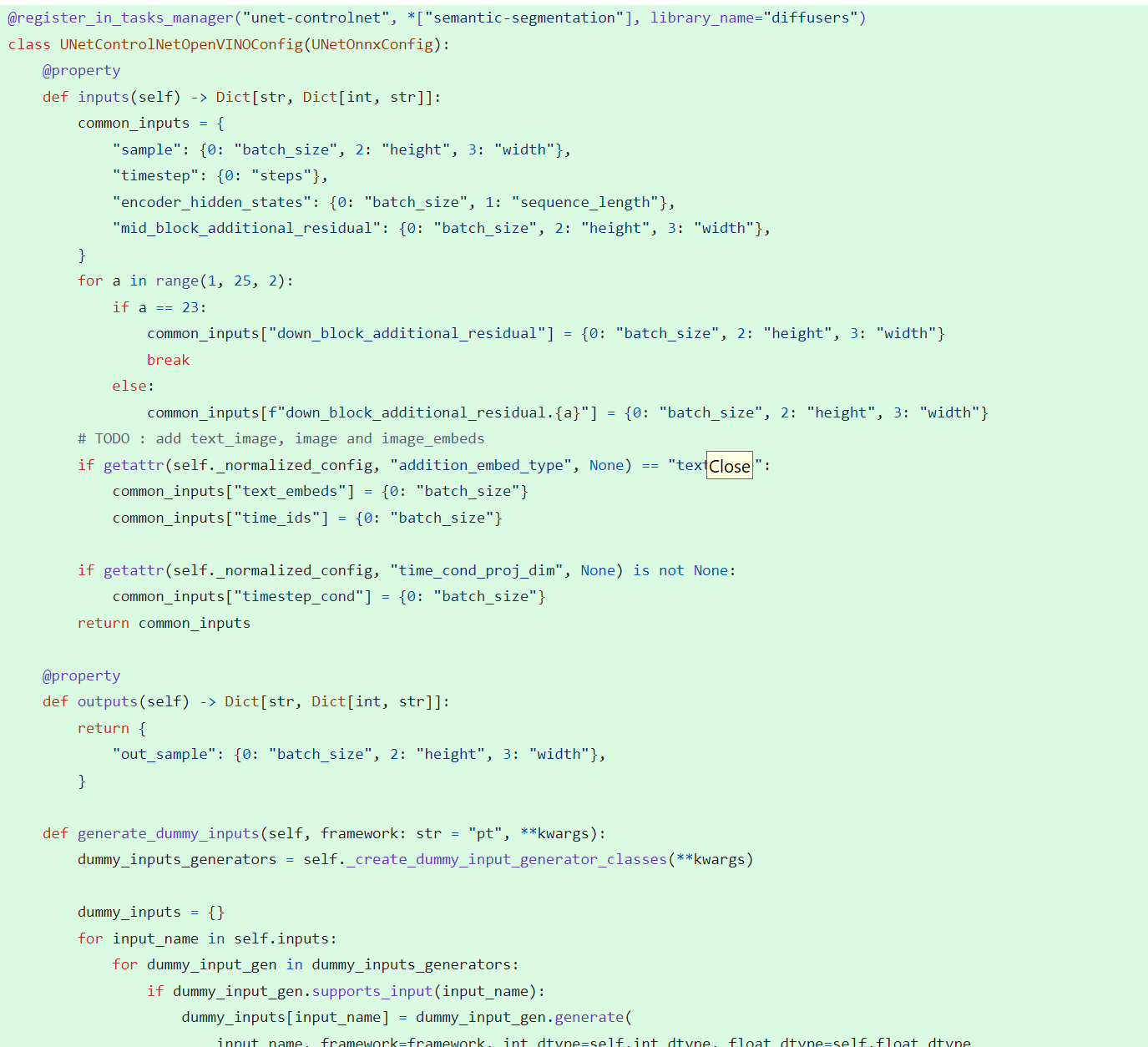
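
The parts listed above can be pictured as the following skeleton. It is only a structural sketch: the class and method names come from the text, but the argument lists, the stubbed bodies, and the way the concrete pipeline combines the base class with the mixin are assumptions modeled on the existing pipeline classes, not the code from the PR.

```python
class OVStableDiffusionControlNetPipelineBase:
    """Hypothetical skeleton: loading, export, and reshape hooks."""

    @classmethod
    def _from_pretrained(cls, model_id, **kwargs):
        # Would load OpenVINO IR files from a local folder or a download.
        raise NotImplementedError

    @classmethod
    def _from_transformers(cls, model_id, **kwargs):
        # Would convert the PyTorch models to OpenVINO IR.
        raise NotImplementedError

    def _reshape_unet_controlnet(self, model, **shape_kwargs):
        # Would fix the input shapes of the UNet that consumes ControlNet outputs.
        raise NotImplementedError

    def _reshape_controlnet(self, model, **shape_kwargs):
        # Would fix the input shapes of the ControlNet model itself.
        raise NotImplementedError


class StableDiffusionContrlNetPipelineMixin:
    """Hypothetical skeleton: the inference entry point."""

    def __call__(self, prompt, image, **kwargs):
        raise NotImplementedError


class OVStableDiffusionControlNetPipeline(
    OVStableDiffusionControlNetPipelineBase, StableDiffusionContrlNetPipelineMixin
):
    """Concrete pipeline: the base supplies loading, the mixin supplies inference."""


mro_names = [cls.__name__ for cls in OVStableDiffusionControlNetPipeline.__mro__]
print(mro_names)
```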

In model_configs.py, we define UNetControlNetOpenVINOConfig, which inherits from UNetOnnxConfig and describes the inputs and outputs of the UNet used with ControlNet.
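
As a rough illustration of what such a config has to describe, the sketch below builds a mapping from input names to their dynamic axes. The three base entries follow the usual UNet export inputs; the residual input names and axis labels are hypothetical placeholders rather than the names used in the PR. The count of 12 down-block residuals plus one mid-block residual matches the Stable Diffusion 1.5 ControlNet.

```python
from typing import Dict

NUM_DOWN_RESIDUALS = 12  # SD 1.5 ControlNet emits 12 down-block residuals


def unet_controlnet_inputs(
    num_down: int = NUM_DOWN_RESIDUALS,
) -> Dict[str, Dict[int, str]]:
    """Map each input name to its dynamic axes (axis index -> axis label)."""
    inputs: Dict[str, Dict[int, str]] = {
        "sample": {0: "batch_size", 2: "height", 3: "width"},
        "timestep": {0: "steps"},
        "encoder_hidden_states": {0: "batch_size", 1: "sequence_length"},
    }
    # Hypothetical names: one extra input per ControlNet down-block residual.
    for index in range(num_down):
        inputs[f"down_block_additional_residual_{index}"] = {
            0: "batch_size",
            2: "residual_height",
            3: "residual_width",
        }
    # Hypothetical name: the single mid-block residual.
    inputs["mid_block_additional_residual"] = {
        0: "batch_size",
        2: "residual_height",
        3: "residual_width",
    }
    return inputs


config_inputs = unet_controlnet_inputs()
print(len(config_inputs), "inputs")
```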

This completes the overall structure of the code. The remaining changes are fine-grained details, so we won't go into them here.

How to use StableDiffusionControlNet pipeline via Optimum-Intel

The next step is to use the code. Examples can be found in this repository.

Install and update the environment and dependencies from source. Make sure your Python version is greater than 3.10 and that your optimum-intel and optimum versions are up to date according to requirements.txt.

# %python -m venv stable-diffusion-controlnet
# %source stable-diffusion-controlnet/bin/activate
%pip install -r requirements.txt
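
After installing, a quick sanity check of the environment can save some debugging later. The sketch below uses only the standard library; the package list and the reading of the version requirement as "3.10 or newer" are assumptions, so adjust both to match requirements.txt.

```python
import sys
from importlib import metadata
from typing import Dict, Iterable, Optional


def installed_versions(packages: Iterable[str]) -> Dict[str, Optional[str]]:
    """Return the installed version of each package, or None if it is missing."""
    versions: Dict[str, Optional[str]] = {}
    for name in packages:
        try:
            versions[name] = metadata.version(name)
        except metadata.PackageNotFoundError:
            versions[name] = None
    return versions


# Assumption: the requirement is interpreted as Python 3.10 or newer.
python_ok = sys.version_info >= (3, 10)
report = installed_versions(["optimum", "optimum-intel", "openvino", "diffusers"])

print("Python version OK:", python_ok)
for package, version in report.items():
    print(f"{package}: {version or 'not installed'}")
```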

First, convert the PyTorch models to OpenVINO IR with dynamic shapes. Start by importing the required packages.

import os
from diffusers import UniPCMultistepScheduler
from optimum.intel import OVStableDiffusionControlNetPipeline

Set the paths to the PyTorch models of Stable Diffusion 1.5 and ControlNet if you have them locally. Otherwise, the pipeline downloads them.

SD15_PYTORCH_MODEL_DIR = "stable-diffusion-v1-5"
CONTROLNET_PYTORCH_MODEL_DIR = "control_v11p_sd15_openpose"

if os.path.exists(SD15_PYTORCH_MODEL_DIR) and os.path.exists(CONTROLNET_PYTORCH_MODEL_DIR):
    # Both models are available locally: export from the local folders.
    scheduler = UniPCMultistepScheduler.from_config("scheduler_config.json")
    ov_pipe = OVStableDiffusionControlNetPipeline.from_pretrained(SD15_PYTORCH_MODEL_DIR, controlnet_model_id=CONTROLNET_PYTORCH_MODEL_DIR, compile=False, export=True, scheduler=scheduler, device="GPU.1")
    ov_pipe.save_pretrained(save_directory="./ov_models_dynamic")
    print("Dynamic model is saved in ./ov_models_dynamic")
else:
    # Otherwise download the models by their model IDs and export them.
    scheduler = UniPCMultistepScheduler.from_config("scheduler_config.json")
    ov_pipe = OVStableDiffusionControlNetPipeline.from_pretrained("runwayml/stable-diffusion-v1-5", controlnet_model_id="lllyasviel/control_v11p_sd15_openpose", compile=False, export=True, scheduler=scheduler, device="GPU.1")
    ov_pipe.save_pretrained(save_directory="./ov_models_dynamic")
    print("Dynamic model is saved in ./ov_models_dynamic")

You should now have the OpenVINO IR model files under the **ov_models_dynamic** folder.
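
An OpenVINO IR model consists of an .xml file (the network topology) and a .bin file (the weights), so one simple way to check the export is to look for .xml files that lack a matching .bin. The helper name below is hypothetical and the check makes no assumption about the sub-folder layout of the exported pipeline.

```python
from pathlib import Path
from typing import List


def find_incomplete_ir(root: Path) -> List[Path]:
    """Return every .xml under root that has no sibling .bin weights file."""
    if not root.is_dir():
        raise FileNotFoundError(f"{root} is not a directory")
    return sorted(
        xml for xml in root.rglob("*.xml") if not xml.with_suffix(".bin").exists()
    )


if __name__ == "__main__":
    export_dir = Path("./ov_models_dynamic")
    if export_dir.is_dir():
        missing = find_incomplete_ir(export_dir)
        print("incomplete IR files:", missing or "none")
    else:
        print(f"{export_dir} not found; run the export step first")
```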

Next, import the packages needed for inference.

import os
from pathlib import Path

import numpy as np
import requests
import torch
from controlnet_aux import OpenposeDetector
from diffusers import UniPCMultistepScheduler
from optimum.intel import OVStableDiffusionControlNetPipeline
from PIL import Image

We recommend using a static-shape model to reduce GPU memory cost. Set your STATIC_SHAPE and DEVICE_NAME.

NEED_STATIC = True
STATIC_SHAPE = [1024, 1024]
DEVICE_NAME = "GPU.1"
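
The device name "GPU.1" only exists on machines where OpenVINO enumerates more than one GPU. On a single-GPU machine the device is usually reported as plain "GPU". The sketch below queries the available devices and falls back to CPU when the preferred one is missing. The helper names and the CPU fallback are assumptions, and the query itself needs a recent openvino package; without it the list is simply empty.

```python
from typing import List, Sequence


def choose_device(preferred: str, available: Sequence[str], fallback: str = "CPU") -> str:
    """Return the preferred device if OpenVINO reports it, else the fallback."""
    return preferred if preferred in available else fallback


def query_devices() -> List[str]:
    """List OpenVINO device names, or an empty list if openvino is not installed."""
    try:
        import openvino as ov
    except ImportError:
        return []
    return list(ov.Core().available_devices)


DEVICE_NAME = choose_device("GPU.1", query_devices())
print("Using device:", DEVICE_NAME)
```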

Load the OpenVINO model files. If you want static shapes, reshape the dynamic models to a fixed shape.

if NEED_STATIC:
    print("Using static models")
    scheduler = UniPCMultistepScheduler.from_config("scheduler_config.json")
    ov_config = {"CACHE_DIR": "", "INFERENCE_PRECISION_HINT": "f16"}
    if not os.path.exists("ov_models_static"):
        if os.path.exists("ov_models_dynamic"):
            # First run: load the dynamic models, fix their shapes, and cache the result.
            print("load dynamic models from local ov files and reshape to static")
            ov_pipe = OVStableDiffusionControlNetPipeline.from_pretrained(Path("ov_models_dynamic"), scheduler=scheduler, device=DEVICE_NAME, compile=True, ov_config=ov_config, height=STATIC_SHAPE[0], width=STATIC_SHAPE[1])
            ov_pipe.reshape(batch_size=1, height=STATIC_SHAPE[0], width=STATIC_SHAPE[1], num_images_per_prompt=1)
            ov_pipe.save_pretrained(save_directory="./ov_models_static")
            print("Static model is saved in ./ov_models_static")
        else:
            raise ValueError("No ov_models_dynamic exists, please run ov_model_export.py first")
    else:
        # Later runs: reuse the static models saved earlier.
        print("load static models from local ov files")
        ov_pipe = OVStableDiffusionControlNetPipeline.from_pretrained(Path("ov_models_static"), scheduler=scheduler, device=DEVICE_NAME, compile=True, ov_config=ov_config, height=STATIC_SHAPE[0], width=STATIC_SHAPE[1])
else:
    scheduler = UniPCMultistepScheduler.from_config("scheduler_config.json")
    ov_config = {"CACHE_DIR": "", "INFERENCE_PRECISION_HINT": "f16"}
    print("load dynamic models from local ov files")
    ov_pipe = OVStableDiffusionControlNetPipeline.from_pretrained(Path("ov_models_dynamic"), scheduler=scheduler, device=DEVICE_NAME, compile=True, ov_config=ov_config)
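
To get a feel for what the reshape step pins down, the sketch below computes the tensor shapes you would expect for Stable Diffusion 1.5 at a given image size: the VAE downsamples by a factor of 8 into 4 latent channels, and the text encoder yields 77 tokens of width 768. The input names, and the doubling of the batch for classifier-free guidance, are assumptions for illustration; the shapes fixed by the actual reshape code may differ.

```python
from typing import Dict, Tuple

VAE_SCALE_FACTOR = 8      # SD 1.5 VAE downsamples height and width by 8
LATENT_CHANNELS = 4       # SD 1.5 latent channels
TOKENIZER_MAX_LENGTH = 77 # CLIP tokenizer length used by SD 1.5
TEXT_HIDDEN_SIZE = 768    # CLIP text encoder width used by SD 1.5


def static_input_shapes(
    height: int,
    width: int,
    batch_size: int = 1,
    num_images_per_prompt: int = 1,
    classifier_free_guidance: bool = True,
) -> Dict[str, Tuple[int, ...]]:
    """Compute the fixed input shapes for one image size (illustrative names)."""
    if height % VAE_SCALE_FACTOR or width % VAE_SCALE_FACTOR:
        raise ValueError("height and width must be multiples of 8")
    batch = batch_size * num_images_per_prompt
    if classifier_free_guidance:
        # Assumption: conditional and unconditional inputs share one batch.
        batch *= 2
    return {
        "sample": (batch, LATENT_CHANNELS, height // VAE_SCALE_FACTOR, width // VAE_SCALE_FACTOR),
        "controlnet_cond": (batch, 3, height, width),
        "encoder_hidden_states": (batch, TOKENIZER_MAX_LENGTH, TEXT_HIDDEN_SIZE),
    }


shapes = static_input_shapes(1024, 1024)
for name, shape in shapes.items():
    print(name, shape)
```

With every dimension known ahead of time, the runtime can plan memory for exactly one shape, which is where the memory savings of static models come from.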

Set the seed for NumPy and PyTorch to make the results reproducible.

seed = 42
torch.manual_seed(seed)
torch.cuda.manual_seed(seed)
torch.cuda.manual_seed_all(seed)
np.random.seed(seed)

Load the image for ControlNet. You can use your own image or generate a pose image with an OpenPose OpenVINO model. Note that the OpenPose model is not yet supported by OVStableDiffusionControlNetPipeline, so you would need to convert it to an OpenVINO model manually first. Here we directly use a pose image that OpenPose has already produced:

pose = Image.open(Path("pose_1024.png"))
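
When the models are static, the pose image has to match the shape they were reshaped to. One easy mistake is the axis order: PIL reports an image size as (width, height), while STATIC_SHAPE in this post is indexed as [height, width]. The helper below is a small hypothetical check that takes the size tuple directly, so it runs without PIL.

```python
from typing import Sequence, Tuple


def matches_static_shape(image_size: Tuple[int, int], static_shape: Sequence[int]) -> bool:
    """Compare a PIL-style (width, height) size with a [height, width] shape."""
    width, height = image_size
    return [height, width] == list(static_shape)


# A 768-wide, 1024-tall image matches [1024, 768], not [768, 1024].
print(matches_static_shape((768, 1024), [1024, 768]))
print(matches_static_shape((768, 1024), [768, 1024]))
```

In the pipeline code this would be called as `matches_static_shape(pose.size, STATIC_SHAPE)`. If it returns False, resize the image before running inference.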

Set the prompt, negative prompt, and image inputs, then run the pipeline and save the result.

prompt = "Dancing Darth Vader, best quality, extremely detailed"
negative_prompt = "monochrome, lowres, bad anatomy, worst quality, low quality"
result = ov_pipe(prompt=prompt, image=pose, num_inference_steps=20, negative_prompt=negative_prompt, height=STATIC_SHAPE[0], width=STATIC_SHAPE[1])
result[0].save("result_1024.png")
